Prologue

Like many companies, Comnet still runs some internal tools that were built in the stone age. We update their databases continually but barely touch the application software itself. Ironically, we are strong believers in the ‘if it ain’t broke, don’t fix it’ concept, so these legacy internal applications may well live on into the next decade! Another irony is that we are too busy serving clients and customers to their satisfaction, in line with the ‘serve the customer first’ concept!

photo from flickr by Roland Tanglao (https://www.flickr.com/photos/roland/)

Anyway, on to my problem. Yesterday our Apache2 (version 2.0.55, built on July 26, 2006), running on an ancient Ubuntu (6.06.1 LTS) virtual machine, failed to start properly. The symptom was that nobody could access the HTTP service after a long power outage over the weekend. As a good citizen, I logged in to the server, checked that the Apache2 process had started, then ran ‘netstat -a’ (or ‘ss’ if you like) and found that port 80 was held by Apache2. Everything looked quite normal, so I restarted the service (I had to use the old ‘/etc/init.d/apache2 restart’) to rule out hiccups or errors in the previous start. Yet after the restart, Apache2 still could not provide the HTTP service (it could not even finish starting). I also noticed that ‘ps ax’ showed a single ‘/usr/sbin/apache2 -k start’ entry instead of the usual multiple pre-forked processes.

I took a look at /var/log/apache2/error.log and found a message

[notice] Digest: generating secret for digest authentication ...

After a few Ubuntu and apache2 restarts (I felt so dumb doing these!), the log showed multiple lines of the same message

[notice] Digest: generating secret for digest authentication ...
[notice] Digest: generating secret for digest authentication ...
[notice] Digest: generating secret for digest authentication ...

After yet another dumb restart, the messages became

[notice] Digest: generating secret for digest authentication ...
[notice] Digest: done
[notice] Apache/2.0.55 (Ubuntu) DAV/2 SVN/1.3.1 PHP/5.1.2 configured -- resuming normal operations

And the service was now ‘resuming normal operations’. What???
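The symptom in the log excerpts above (a ‘generating secret’ notice that is never followed by ‘Digest: done’) is easy to check for mechanically. Here is a minimal Python sketch, assuming the log text has already been read into a string; the function name digest_init_stalled is made up for illustration and is not part of Apache.

```python
# Notice texts copied from the error.log excerpts above.
GENERATING = "Digest: generating secret for digest authentication"
DONE = "Digest: done"


def digest_init_stalled(log_text: str) -> bool:
    """True if the last 'generating secret' notice has no 'Digest: done' after it."""
    last_start = log_text.rfind(GENERATING)
    if last_start == -1:
        return False  # mod_auth_digest never logged a start at all
    return DONE not in log_text[last_start:]


stuck = "[notice] Digest: generating secret for digest authentication ...\n" * 3
healthy = stuck + "[notice] Digest: done\n"
print(digest_init_stalled(stuck))    # True
print(digest_init_stalled(healthy))  # False
```

On a real server you would feed it the tail of /var/log/apache2/error.log rather than a hard-coded string.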

photo from flickr by Kenny Louie https://www.flickr.com/photos/kwl/

What just happened?

To satisfy my curiosity, I searched for the cause using the (sort of) error message as the keyword. There were a lot of discussions about similar errors; however, one from Linux Administrator, a WordPress site (https://linadmin.wordpress.com/2009/06/22/apache2-hangs-with-digest-generating-secret-for-digest-authentication/, called article1 from now on), exactly matched the problem I had. The author mentions that he had SSL enabled, while in my case SSL was disabled. The article goes on to mention that Linux /dev/random uses entropy collected from the environment, such as keyboard activity, audio, etc.

The author further concludes that the lack of entropy in the system caused /dev/random to block (that is, it did not return a value to the software calling it), and hence that /dev/urandom, which never blocks, should be used instead to ensure that Apache2 does not pause during startup.

Wait, what is entropy?

Entropy generally refers to the level of chaos or randomness of a system, and most of us learned the word in a basic thermodynamics class. It has a similar meaning in the information technology world, where it is typically referred to as information entropy, which (from Wikipedia) is defined as the average amount of information produced by a stochastic source of data.
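To make ‘average amount of information’ concrete, here is a small Python sketch of Shannon’s formula, H = sum of p * log2(1/p) over the observed symbols, applied to the bytes of a sample. It only illustrates the definition; it is not how the kernel estimates the entropy of its pool, and the function name is my own.

```python
import math
from collections import Counter


def shannon_entropy_bits(data: bytes) -> float:
    """Average information per byte, in bits, from the observed byte frequencies."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    # H = sum over symbols of p * log2(1 / p)
    return sum((n / total) * math.log2(total / n) for n in counts.values())


print(shannon_entropy_bits(b"aaaaaaaa"))        # 0.0, fully predictable
print(shannon_entropy_bits(b"abababab"))        # 1.0, one bit per symbol
print(shannon_entropy_bits(bytes(range(256))))  # 8.0, every byte value equally likely
```

Note that a high score here only says the byte frequencies are flat; bytes(range(256)) scores 8.0 yet is perfectly predictable, which is why the kernel cares about where its input comes from and not just how it looks.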

In a computing system, randomness has many applications, especially in the security arena: for instance, cryptography-related applications (SSL/TLS, encryption, etc.) or preventing the guessing of certain information (increasing unpredictability), such as the TCP initial sequence number (ISN) or ASLR (Address Space Layout Randomization). Linux provides random numbers to applications or systems that need them through the /dev/random and /dev/urandom device drivers.

Random numbers can be generated by a pseudorandom number generator (PRNG) algorithm, which provides sufficient randomness for most applications. To further strengthen unpredictability, however, Linux is designed to collect randomness from the environment (for example, the timing between keystrokes, audio noise, or the timing between interrupts), convert it to digital values, and store them in the ‘entropy pool’. /dev/random, the user-facing interface to random number generation in the Linux kernel, fetches and transforms data from the entropy pool and returns the result to the application that requested the random bits. With /dev/random, the entropy estimate is depleted by the same amount as is handed out to applications, and to stay non-deterministic, /dev/random waits whenever there are too few entropy bits to satisfy a request. ‘entropy_avail’ is the (estimated) count of bits available in the entropy pool, and checking it can help a Linux administrator make sense of problems that otherwise look mysterious.
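The accounting described above can be pictured with a toy model. The Python sketch below is a deliberate simplification and not the kernel’s implementation: the class and method names are invented, no random bytes are actually produced, and the /dev/urandom side simply ignores the counter, which is an assumption made to keep the example short.

```python
class ToyEntropyPool:
    """Toy model of the entropy bookkeeping described above (not kernel code)."""

    def __init__(self, size_bits: int = 4096) -> None:
        self.size_bits = size_bits
        self.entropy_avail = 0  # estimated bits currently in the pool

    def add_event(self, bits: int) -> None:
        """Credit entropy from an environmental event, capped at the pool size."""
        self.entropy_avail = min(self.size_bits, self.entropy_avail + bits)

    def read_random(self, nbytes: int) -> bool:
        """Model /dev/random: succeed only if enough bits remain, then debit them."""
        needed = nbytes * 8
        if self.entropy_avail < needed:
            return False  # the real device would block here instead
        self.entropy_avail -= needed
        return True

    def read_urandom(self, nbytes: int) -> bool:
        """Model /dev/urandom: never blocks, whatever the estimate says."""
        return True


pool = ToyEntropyPool()
pool.add_event(90)            # a starved pool
print(pool.read_random(20))   # False: 160 bits wanted, 90 available
print(pool.read_urandom(20))  # True
pool.add_event(100)           # more events trickle in
print(pool.read_random(20))   # True: 190 bits were available
print(pool.entropy_avail)     # 30
```

The first read_random call is the situation a blocked reader is in: nothing is wrong with the request, the pool just has to fill up first.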

Applying theory to my problem!

In my case, the situation is a bit different: SSL was disabled, yet I still experienced the problem. After looking at the source code of mod_auth_digest.c (the same version running on my Ubuntu 6), it became clear that ‘initialize_secret()’ needs a random number from the system and that, by default, /dev/random is the source. Hence, if the system runs out of entropy, /dev/random blocks and, as a result, mod_auth_digest blocks too. The end result was that the Apache2 initialization routine could not get past mod_auth_digest and never started providing service! When looking at the entropy availability via

$ cat /proc/sys/kernel/random/entropy_avail

I found that the system had fewer than 100 bits available (skimming through the mod_auth_digest source code, it needs 20 bytes (160 bits) from /dev/random to initialize the module). Somehow, eventually, Apache2 completed the initialization phase, albeit taking longer than usual. This would be acceptable if apache2 did not have to start or restart during office hours while everybody was waiting for the service!
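One way to see this kind of stall without hanging your own shell is to ask a device for the same 20 bytes in non-blocking mode. The Python sketch below is only an illustration of that idea, not what mod_auth_digest does internally; the function name try_read_secret is hypothetical, and it assumes a POSIX system where os.O_NONBLOCK is available.

```python
import os
from typing import Optional

SECRET_LEN = 20  # bytes, i.e. the 160 bits mentioned above


def try_read_secret(device: str, nbytes: int = SECRET_LEN) -> Optional[bytes]:
    """Read up to nbytes without waiting; return None if the read would block."""
    fd = os.open(device, os.O_RDONLY | os.O_NONBLOCK)
    try:
        return os.read(fd, nbytes)  # may be shorter than nbytes
    except BlockingIOError:
        return None  # the device has nothing to give right now
    finally:
        os.close(fd)


if os.path.exists("/dev/urandom"):
    secret = try_read_secret("/dev/urandom")
    print(secret is not None and len(secret) == SECRET_LEN)
```

Pointing the same call at /dev/random on a starved system of this vintage should give None or a short read instead of hanging, which is the condition Apache2 was silently waiting out.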

I did some more research on the topic and found that an old version of Linux running on a virtual machine (ESXi in my case) is likely to hit the problem more often than one on real hardware, because the virtual machine may not be able to collect entropy as fast as the system and applications need it (imagine that the hypervisor does not produce the audio and other noise that Linux harvests as entropy on a real machine). This is especially true if /dev/random is used, because /dev/random spends one bit of entropy for every random bit it generates and blocks when not enough entropy bits are available. In contrast, /dev/urandom keeps generating random numbers even when there are not enough entropy bits left.

So it becomes obvious that /dev/urandom should be used instead of /dev/random. In general, the random numbers generated by /dev/random and /dev/urandom are of similar quality, as both are based on the same CSPRNG (Cryptographically Secure Pseudo Random Number Generator) algorithm in the kernel (formerly SHA-1; more recent kernels use ChaCha20 instead). See http://www.2uo.de/myths-about-urandom/ for more information. To use /dev/urandom instead of /dev/random, rng-tools is needed; please see reference 1 for how to set this up. If you insist on using /dev/random and rng-tools is not available to you, another option is an entropy generation daemon such as Haveged or Timer Entropy Daemon.

How much entropy_avail is sufficient?

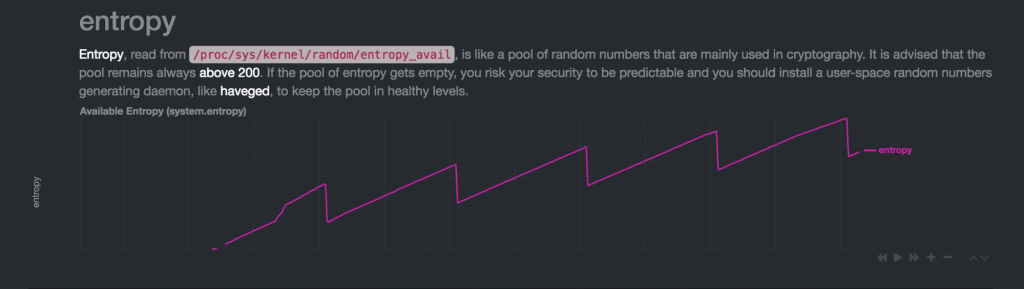

figure from security.stackexchange.com, asked by techraf (https://security.stackexchange.com/questions/126875/whats-eating-my-entropy-or-what-does-entropy-avail-really-show)

The next natural question is: how many bits should there be in the entropy pool, and who needs these random numbers? It turns out that random numbers are used quite a lot in a Linux system, e.g. for the TCP/IP ISN (Initial Sequence Number) by the kernel, for message IDs in some mail systems, for key management in SSH, for SSL/TLS, for session cookies in some PHP/web applications, and a lot more. The number of bits drawn from the entropy pool also depends on the programming language implementation and on how the application generates its random numbers; for example, mod_auth_digest (the version I use) needs (and takes away from the entropy pool) 20 bytes, or 160 bits. The full entropy pool size is 4096 bits, or 512 bytes [[ NOTE: size is adjustable using: sysctl -w kernel.random.poolsize=xxxx, the default is 4096 ]]. entropy_avail should not drop below 200 bits (and should stay at or above 1024 bits if possible); a value in the low hundreds can delay certain applications and operations because /dev/random blocks.
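These rules of thumb are simple enough to turn into a tiny check. The Python sketch below uses the 200-bit and 1024-bit figures from the paragraph above; the three labels and the function names are my own invention, and the thresholds are this article’s rules of thumb rather than anything the kernel defines.

```python
from pathlib import Path

ENTROPY_AVAIL = "/proc/sys/kernel/random/entropy_avail"
LOW_WATERMARK = 200  # bits; below this, expect /dev/random readers to stall
COMFORTABLE = 1024   # bits; the preferred floor


def classify_entropy(bits: int) -> str:
    """Map an entropy_avail reading to a rough (hypothetical) health label."""
    if bits < LOW_WATERMARK:
        return "low"
    if bits < COMFORTABLE:
        return "adequate"
    return "comfortable"


def read_entropy_avail(path: str = ENTROPY_AVAIL) -> int:
    """Read the kernel's current estimate, in bits."""
    return int(Path(path).read_text().strip())


for sample in (30, 160, 512, 4096):
    print(sample, classify_entropy(sample))

if Path(ENTROPY_AVAIL).exists():
    current = read_entropy_avail()
    print("now:", current, classify_entropy(current))
```

Run from cron or a monitoring agent, a check like this would have flagged the VM long before the power outage forced a restart.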

Epilogue

I decided to install ‘haveged’ to boost the entropy pool, and immediately after starting haveged, entropy_avail jumped to 4096 bits (albeit only once and never again!) from 30 bits before installation. Living with this Ubuntu VM should be much easier now!

If you are interested in Linux /dev/random and PRNG, reference [6] should be a good read, followed by the Wikipedia article (reference [5]).

References

1. Linux Administration Site (https://linadmin.wordpress.com/2009/06/22/apache2-hangs-with-digest-generating-secret-for-digest-authentication/)

2. Myths about /dev/urandom by Thomas Hühn (http://www.2uo.de/myths-about-urandom)

3. Haveged – a simple entropy daemon (http://www.issihosts.com/haveged/)

4. Timer entropy daemon (https://www.vanheusden.com/te/)

5. Wikipedia on /dev/random (https://en.wikipedia.org/wiki//dev/random)

6. Linux Pseudorandom Number Generator Revisited (https://eprint.iacr.org/2012/251.pdf)