Recently, I encountered an issue at one of our client ISPs in Thailand that uses our NAT product. The conversation started like this:

“Well, OK. What’s the problem, sir?” I asked.

“Our clients report that their internet connection is slow. Can you identify the main cause of the problem?” he said.

“Before we started using your product, each client had their own public IP address, and there was no issue like this,” he added.

“I will, sir,” I replied right away.

A little background on NAT: NAT stands for Network Address Translation, which lets you assign one public IP address to one PC, or to multiple PCs in your private network, so they can access the internet. A good, easy explanation can be found at https://whatismyipaddress.com/nat, or for a longer read, see RFC 2663.

So, in this case, the ISP (Internet Service Provider) conserves public IP addresses by using NAT, which means its clients share IP addresses to access the internet.
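To make that sharing concrete, here is a minimal sketch of port address translation in Python. It is a toy, not how our product or any real NAT device is implemented: the `ToyNat` class, the public address `203.0.113.10` (a documentation range), and handing out ports from 1024 upward are all hypothetical, and it tracks only one protocol. Each new private (IP, port) pair gets its own port on the shared public IP, and once the pool is empty no new connection can be translated.

```python
# Toy port address translation (PAT): many private hosts share one public IP.
# Hypothetical illustration only; real NAT devices track protocols, timeouts, and more.


class ToyNat:
    def __init__(self, public_ip: str, first_port: int = 1024, last_port: int = 65535):
        self.public_ip = public_ip
        # Hypothetical pool of public ports for ONE protocol (say TCP).
        # Stored in descending order so pop() hands out the lowest free port first.
        self.free_ports = list(range(last_port, first_port - 1, -1))
        # (private IP, private port) -> public port on the shared address
        self.table: dict[tuple[str, int], int] = {}

    def translate(self, private_ip: str, private_port: int) -> tuple[str, int]:
        key = (private_ip, private_port)
        if key not in self.table:
            if not self.free_ports:
                raise RuntimeError("port pool exhausted: cannot translate a new connection")
            self.table[key] = self.free_ports.pop()
        return self.public_ip, self.table[key]


nat = ToyNat("203.0.113.10")  # hypothetical public IP (documentation range)
print(nat.translate("192.168.1.10", 50000))  # ('203.0.113.10', 1024)
print(nat.translate("192.168.1.11", 50000))  # ('203.0.113.10', 1025)
print(nat.translate("192.168.1.10", 50000))  # ('203.0.113.10', 1024), mapping reused
```

Two different PCs can even use the same private port (50000 here); the NAT tells them apart by giving each its own public port, which is exactly the shared resource that can run out.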

Life would be easy if that were all, but nope. Their clients share not only the IP address but also its TCP/UDP ports (64,511 ports, which is 65,535 - 1,024). The problem lies in the TCP/UDP ports every user is sharing. But why is that a problem? Let’s dig in!!

Photo from https://www.drivers.com/update/pc-fix-tips/10-tips-to-a-faster-pc/

The picture above might look familiar if you’ve ever tried to watch a YouTube video, play an online game, view a live stream, or reach anything outside your own network over the internet.

At the micro level, this happens to you as an individual; at the macro level, for your ISP, it is a port allocation problem. As I said earlier, NAT makes users share an IP address and its TCP/UDP ports. If the ISP’s setting is 1 IP address per 40 users, then 64,511 ports are shared among 40 users. The thing is, if one user uses 5,000 TCP/UDP ports, there are 59,511 left for the other 39 users. No problem, right? Now imagine all 40 users want 5,000 ports at the same time. What would happen? That is 40 × 5,000 = 200,000 ports, more than three times the 64,511 available, so the pool runs dry and new connections have no port left to use.
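The arithmetic above can be checked with a few lines of Python. `PORTS_PER_IP` reuses this post’s figure of 65,535 - 1,024, and `ports_left` is a hypothetical helper written just for this illustration; a negative result means demand exceeds the shared pool.

```python
# Port arithmetic for one public IP shared by 40 users (figures from this post).
PORTS_PER_IP = 65_535 - 1_024   # 64,511 ports, as counted above
USERS_PER_IP = 40               # example ISP setting: 1 IP per 40 users


def ports_left(demand_per_user: list[int], pool: int = PORTS_PER_IP) -> int:
    """Ports remaining in the shared pool; a negative value is a shortfall."""
    return pool - sum(demand_per_user)


# One heavy user takes 5,000 ports while the other 39 are idle.
print(ports_left([5_000] + [0] * (USERS_PER_IP - 1)))  # 59511
# All 40 users want 5,000 ports at the same time.
print(ports_left([5_000] * USERS_PER_IP))  # -135489
# An even split would give each user only this many ports.
print(PORTS_PER_IP // USERS_PER_IP)  # 1612
```

The last line shows why a few heavy users hurt everyone: an even share is only about 1,600 ports per user, so a single user holding thousands of ports eats into everyone else’s share.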

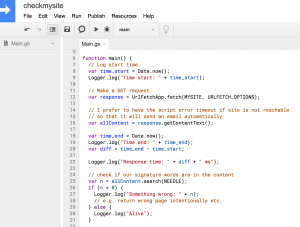

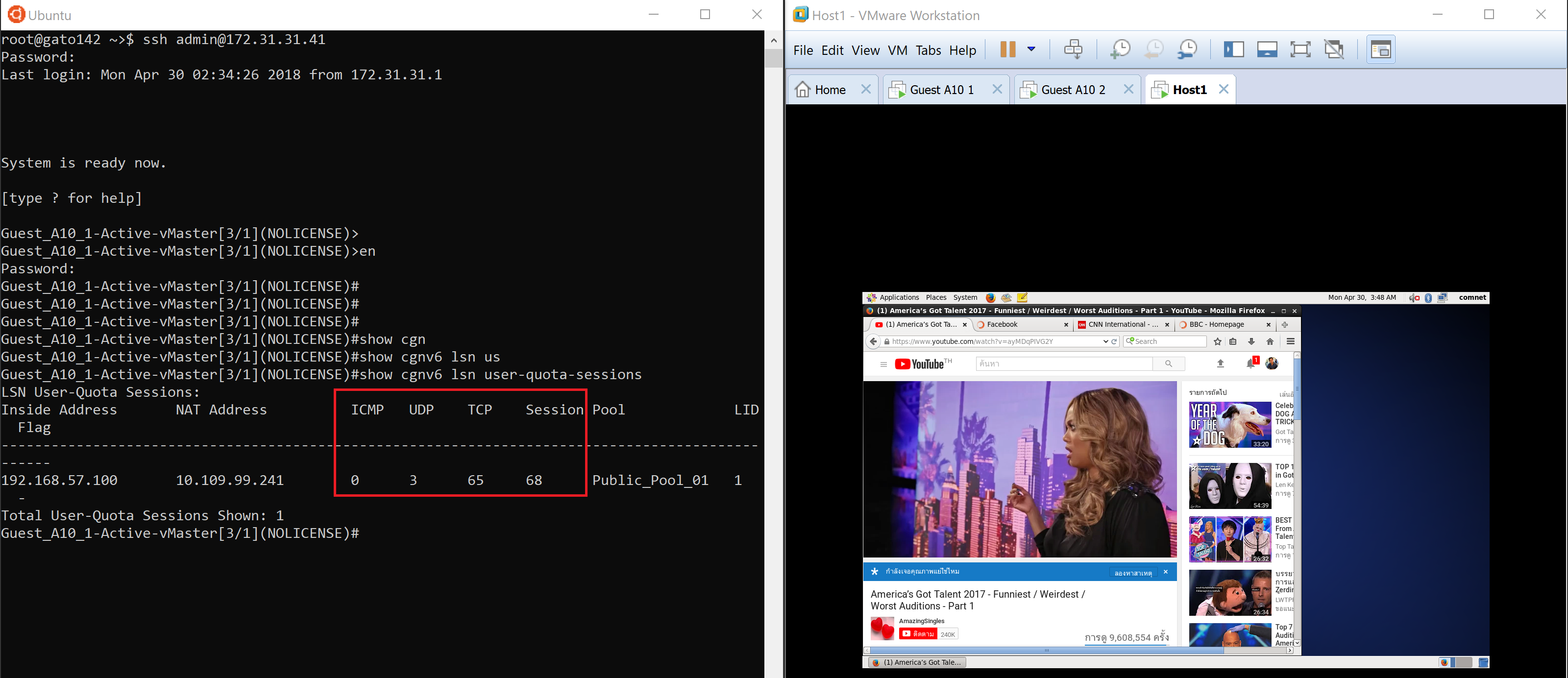

Typically, a normal user uses no more than 200 TCP and UDP ports. I set up a NAT lab using an A10 virtual machine to demonstrate how many TCP/UDP ports one user consumes while surfing the internet: Facebook, YouTube, and general web browsing. The result is pretty straightforward: TCP/UDP port usage is incredibly low.

It shows only 3 UDP ports and 65 TCP ports in use. The session count does not map one-to-one to port usage, because a single TCP or UDP port can carry multiple sessions.
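The sketch below illustrates why session counts and port counts differ. It assumes a NAT device that reuses one public port for sessions to different destinations (whether and when a device does this depends on its mapping behavior and configuration), and the session records are made up for illustration, not taken from the A10 lab. Each session is identified by its full tuple, so three sessions to three servers can share one public TCP port, while UDP ports are counted separately from TCP.

```python
from collections import Counter

# Hypothetical NAT session records for one user (made up, not from the lab):
# (protocol, public source port, destination IP, destination port)
sessions = [
    ("tcp", 1024, "198.51.100.1", 443),
    ("tcp", 1024, "198.51.100.2", 443),  # same public port, different destination
    ("tcp", 1024, "198.51.100.3", 443),
    ("tcp", 1025, "198.51.100.1", 443),
    ("udp", 1024, "198.51.100.9", 53),   # UDP ports are a separate space from TCP
]

# The scarce NAT resource is the (protocol, public port) pair, not the session.
sessions_per_port = Counter((proto, port) for proto, port, _dst_ip, _dst_port in sessions)

print(len(sessions))                     # 5
print(len(sessions_per_port))            # 3
print(sessions_per_port[("tcp", 1024)])  # 3
```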

I figured that if I tested by playing an online game, TCP/UDP port usage would jump much higher.

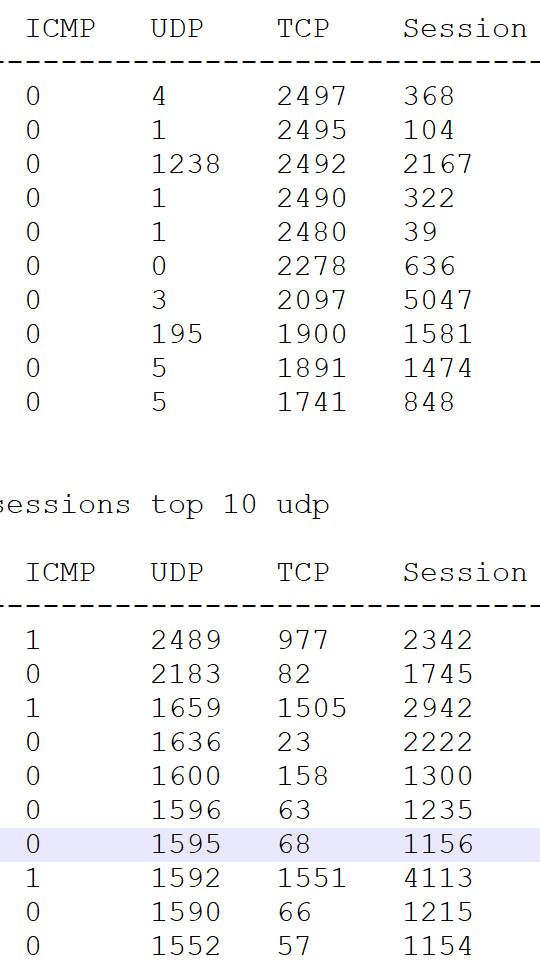

However, while the single-user result looked normal, when I compared it to my client’s environment I saw something strange. The top TCP and UDP port users were hitting the maximum of 2,500 ports I had configured. How could one user be using up to 2,497 TCP ports?
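A check like the one below could surface those top users automatically. It is a sketch: apart from the 2,497 from my client’s environment, the usage numbers and user names are hypothetical, how you export per-user port counts from your NAT device is not shown, and the 90% alert threshold is an arbitrary choice.

```python
PORT_LIMIT = 2_500   # per-user maximum configured in this post
ALERT_RATIO = 0.9    # hypothetical threshold: flag users above 90% of the cap


def heavy_users(usage: dict[str, int], limit: int = PORT_LIMIT,
                ratio: float = ALERT_RATIO) -> list[tuple[str, int]]:
    """Return (user, ports) pairs at or above ratio * limit, busiest first."""
    threshold = limit * ratio
    flagged = [(user, ports) for user, ports in usage.items() if ports >= threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical per-user port counts; only 2,497 comes from the real environment.
usage = {"user-a": 65, "user-b": 2_497, "user-c": 180, "user-d": 2_310}
print(heavy_users(usage))  # [('user-b', 2497), ('user-d', 2310)]
```

Users who sit near the cap while everyone else uses a few hundred ports are the ones worth investigating first.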


What likely happened was that some users had been infected by malware, which caused them to use more ports, either forcibly or because a hacker was exploiting their machines. There are many known types of malware today; take a peek at some of them at https://www.veracode.com/blog/2012/10/common-malware-types-cybersecurity-101

Some of what I found looked like one of the most common attacks: DDoS. If your computer is infected, it may become part of a botnet controlled by a hacker, who uses your computer to carry out attacks without your knowledge. Cyber war is happening every day, even if you don’t realize it. Take a look at the Threat Map from our supplier Fortinet at https://threatmap.fortiguard.com/, which shows a pretty cool geographic map of attacks in real time.

In the end, when your internet feels slow, check yourself first: make sure you are protected by anti-malware software and that your firewall is turned on. It is crucial that no one is exploiting your device and slowing you down. You pay for what you want, and you will get what you pay for when you keep yourself secure.